The Avocado Armchair

Reflections on generative AI, and a farewell to Replicate's Berkeley office

I met Ben Firshman in Berkeley in the spring of 2021. He was starting a new company called Replicate with his friend and former colleague Andreas Jansson, with the goal of making machine learning more accessible to software engineers. They knew I’d worked on a lot of “make hard stuff easy” projects like Swagger, Heroku, npm, and Electron, and they wanted me to join their new company as the first employee. I didn’t have any experience with machine learning at the time, but I could see these two bright and bushy-tailed hackers had twinkles in their eyes. They’d been around the block and seemed to have a tingling spidey-sense that AI was about to be a really big deal.

But I was still skeptical. I had experienced some of the magic of AI through tools like GitHub Copilot, a programming assistant with an uncanny ability to autocomplete my code as I wrote it. But the state of AI-powered image generation at the time was still pretty unimpressive to me. Ben and Andreas were so excited about early text-to-image models, but to me, most of that stuff looked like incomprehensible piles of eyeballs, and I just didn’t get what all the fuss was about.

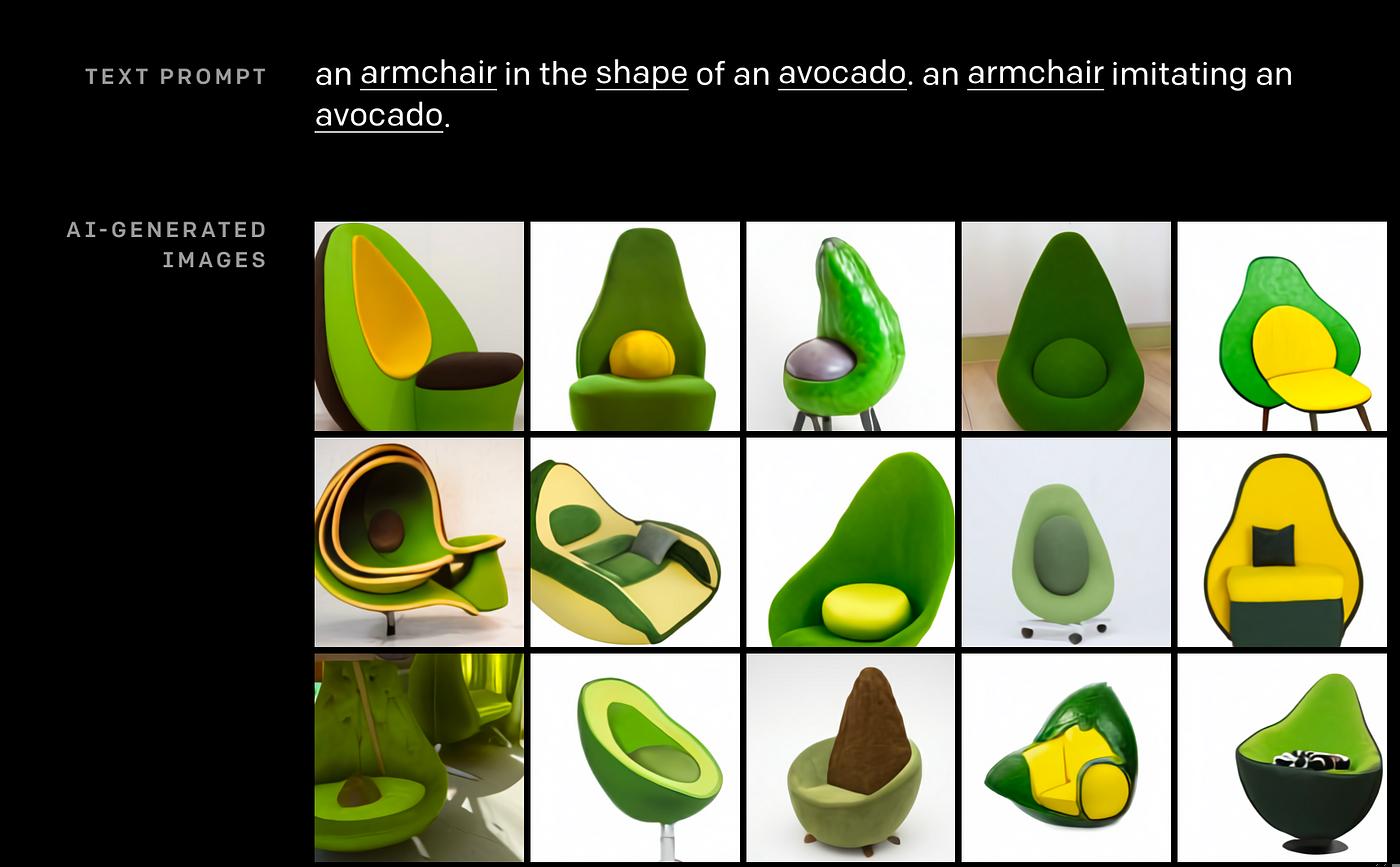

But then they showed me DALL·E, a brand new image generation model from OpenAI. Unlike everything that came before it, OpenAI’s new model could take a text prompt and generate high-quality images, even of never-before-seen things like “an avocado in the shape of an armchair”.

I remember the moment I saw my first avocado armchair. I was in Replicate’s Berkeley office, a lovely sunlit space in an old brick building, perched above the Hidden Cafe and the Strawberry Creek Park. Ben was sitting at his computer, and I was leaning over his shoulder as he pulled up the DALL·E website. The images of the avocado armchair were striking. What was this sorcery? How could a computer program conjure these kinds of novel images from the ether?

These avocado armchairs showed me how much the worlds of software, design, art, and visual communication were about to change forever. I was finally starting to see what Ben and Andreas were so excited about. So, in the fall of 2021, I left my cushy job at GitHub and joined the Replicate team.

Ben and I share a love of junk joints and found items, and Replicate’s Berkeley office was a testament to that. Much of the furniture and decor for the space was sourced from places like Urban Ore and the El Cerrito Recycling Center, and some of it was even found right on the street, including our very own dining table and a set of “avocado armchairs”. For me, these real-life avocado armchairs were a token of many of our company’s shared ideals: artistic expression, creative reuse, and absurdity.

Now it’s two years later, and a lot of what we envisioned in the early days of Replicate has come to pass. Replicate has grown considerably, largely because of Ben and Andreas’s foresight about the “Stable Diffusion moment”, an important milestone in recent AI history when open-source image generation models started to rival the capabilities of closed-source models like OpenAI’s DALL·E. More AI development is happening in the open, with companies like Meta and Stability AI open-sourcing their models so the community can experiment with them and use them in unexpected new ways. There are now scores of open-source image generation models capable of rendering avocado armchairs with much higher fidelity than what we saw from OpenAI’s original closed-source model.

Replicate is growing up. We now have a team of more than 30 people, and we're building a product used by millions of people. Part of that growth means we're moving out of our Berkeley office and into a new space in San Francisco. I'm sad to say goodbye to our avocado armchairs, but I'm excited to see what the future holds for Replicate and the world of AI.